TL;DR: The midgame meltdowns weren’t random. They came from three predictable causes: state drift, context dilution plus recency bias, and a training-data gap beyond the opening. Kaggle already sent a canonical board state (FEN) every turn and enforced legality. What would still prevent most failures is forcing state reconciliation (have the model echo and fix the board before planning) and returning reasons for illegal retries; an optional visual cross-check could help further.

The moves that gave the game away

Let’s start with the positions, because the positions tell the story.

1) “Rh1–h2” onto your own pawn

In one game, the model (White) seriously considered Rh1–h2 while a white pawn stood on h2. Any human glance says “illegal.” Why did the model even float it?

- In a text-only pipeline, the model tracks the game as words (PGN, short notes).
- It rebuilds the board from those symbols each turn, and a small bookkeeping slip can desync that mental board.
- Result: its mental board forgot the h2 pawn, so Rh1–h2 looked legal.

That’s state drift: the board in the model’s head diverges from reality.

2) “Move the rook to g1”—but it grabbed the wrong one

Faced with a simple defensive idea (R?g1), the model shifted the other rook sideways instead.

- It had the right plan (put a rook on g1)…
- …but bound the command to the wrong piece.

That’s pointer misbinding: a specific piece reference gets lost in the soup of text tokens. In a proper board representation, each rook is an object with an ID; in chat, they’re just “rooks,” and the association can slip.

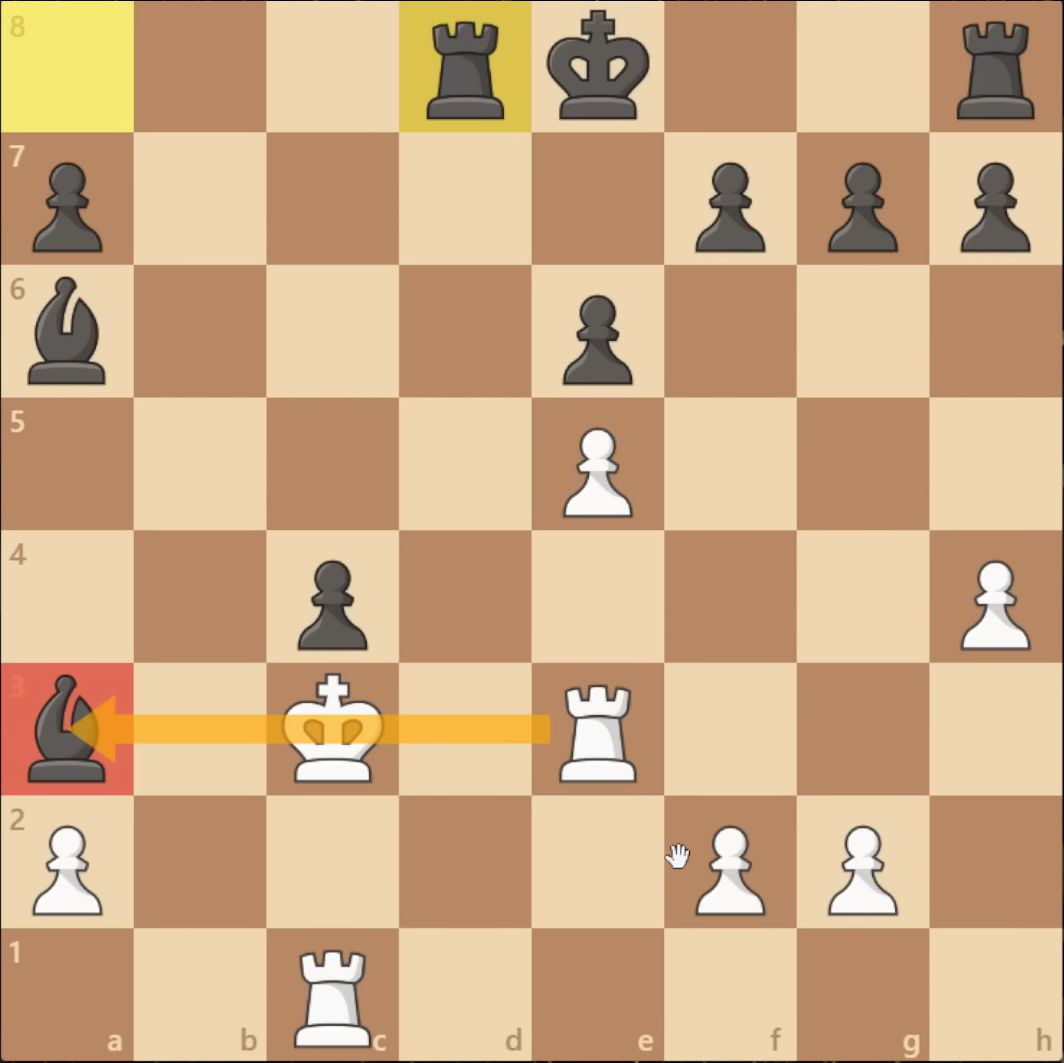
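To make the contrast concrete, here’s a minimal sketch, assuming a board stored as a Python dict from square names to piece letters (uppercase for White, lowercase for Black). The origin square plays the role of the piece ID: when two rooks can both reach g1, the resolver refuses to guess and demands an explicit origin, which is the job SAN disambiguation does (Rag1 vs Rgg1 in the toy position below). `rook_can_reach` and `resolve_rook` are hypothetical helpers, and pins and checks are ignored.

```python
from __future__ import annotations

FILES = "abcdefgh"


def rook_can_reach(board: dict[str, str], frm: str, to: str) -> bool:
    """True if a rook on `frm` can slide to `to` along a rank or file with nothing in between."""
    f0, r0 = FILES.index(frm[0]), int(frm[1])
    f1, r1 = FILES.index(to[0]), int(to[1])
    if frm == to or (f0 != f1 and r0 != r1):
        return False
    df = (f1 > f0) - (f1 < f0)  # file step: -1, 0 or +1
    dr = (r1 > r0) - (r1 < r0)  # rank step: -1, 0 or +1
    f, r = f0 + df, r0 + dr
    while (f, r) != (f1, r1):
        if f"{FILES[f]}{r}" in board:
            return False  # something sits between origin and target
        f, r = f + df, r + dr
    return True


def resolve_rook(board: dict[str, str], rook: str, to: str, origin: str | None = None) -> str:
    """Return the origin square of the rook that moves to `to`.

    `rook` is 'R' (White) or 'r' (Black). Raises ValueError instead of
    silently picking a rook when the command is ambiguous or impossible.
    Pins and checks are deliberately ignored in this sketch.
    """
    target = board.get(to)
    if target is not None and target.isupper() == rook.isupper():
        raise ValueError(f"{to} is occupied by your own piece")
    candidates = sorted(
        sq for sq, piece in board.items()
        if piece == rook and rook_can_reach(board, sq, to)
    )
    if origin is not None:
        if origin not in candidates:
            raise ValueError(f"no {rook} on {origin} can reach {to}")
        return origin
    if len(candidates) != 1:
        raise ValueError(f"cannot pick one rook for {to}: candidates {candidates}")
    return candidates[0]


board = {"a1": "R", "g5": "R", "h1": "K"}
print(resolve_rook(board, "R", "g1", origin="g5"))  # g5
```

Calling `resolve_rook(board, "R", "g1")` without an origin on the same board raises, because both a1 and g5 qualify. That failure is the point: an ambiguous command surfaces as an error instead of quietly moving the other rook.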

3) “Re3xa3” through your own king and pawn

Later, White considered Re3xa3 even though the white king on d3 (and a pawn near c3/c4) blocked the rook’s path to a3.

- Rewind a few moves: the king stepped up-right, then back down; a pawn advanced.
- Somewhere in that tiny sequence, the model’s internal board failed to put one of those blockers back correctly.
- Fast-forward: it now sees a clean e3→a3 line and confidently proposes a capture that cannot exist on the real board.

That’s a line-of-sight hallucination caused by earlier temporal desync. And because nothing forces it to re-check reality, it will often retry the same illegal idea until the framework times it out.
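A referee doesn’t need the model’s memory to catch this: walking the squares between origin and target on the canonical board finds the blocker directly. Below is a minimal sketch using a square→piece dict; `squares_between` and `first_blocker` are illustrative names, and the toy position (rook e3, king d3, plus a pawn on c3 for illustration) is only loosely modeled on the game.

```python
from __future__ import annotations

FILES = "abcdefgh"


def squares_between(frm: str, to: str) -> list[str]:
    """Squares strictly between `frm` and `to` on a shared rank, file or diagonal."""
    f0, r0 = FILES.index(frm[0]), int(frm[1])
    f1, r1 = FILES.index(to[0]), int(to[1])
    df, dr = f1 - f0, r1 - r0
    if frm == to or not (df == 0 or dr == 0 or abs(df) == abs(dr)):
        raise ValueError(f"{frm} and {to} do not share a line")
    step_f = (df > 0) - (df < 0)  # -1, 0 or +1
    step_r = (dr > 0) - (dr < 0)
    n = max(abs(df), abs(dr))
    return [f"{FILES[f0 + i * step_f]}{r0 + i * step_r}" for i in range(1, n)]


def first_blocker(board: dict[str, str], frm: str, to: str) -> tuple[str, str] | None:
    """Return (square, piece) of the first piece on the path, or None if the line is clear."""
    for sq in squares_between(frm, to):
        if sq in board:
            return sq, board[sq]
    return None


board = {"e3": "R", "d3": "K", "c3": "P", "a3": "p"}
print(squares_between("e3", "a3"))       # ['d3', 'c3', 'b3']
print(first_blocker(board, "e3", "a3"))  # ('d3', 'K')
```

Because the check always runs against the canonical board, an earlier desync in the model’s head can’t leak into the verdict.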

How the Kaggle harness worked (quick facts):

• Input each turn: FEN + SAN history; model returns a SAN move.

• No images or external tools; text-only I/O.

• Rules enforced by a referee (up to 3 retries on illegal moves, then forfeit).

• No legal-move list provided in-prompt; models inferred legality themselves.

Fix it with three small, practical steps:

Canonical state every turn (source of truth).

Feed the model a structured board (FEN or a JSON square→piece map) on each ply. Ask it to echo the board back before planning. If its echo disagrees, force reconciliation (“List mismatches and correct them.”), then let it think.
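Here’s a minimal sketch of the echo-and-reconcile step, assuming the model’s echo has already been parsed into the same square→piece dict (that parsing isn’t shown, and both helper names are hypothetical). `fen_to_board` expands only the piece-placement field of a FEN; `board_mismatches` produces the list you’d hand back with “List mismatches and correct them.”

```python
from __future__ import annotations

FILES = "abcdefgh"


def fen_to_board(fen: str) -> dict[str, str]:
    """Expand the piece-placement field of a FEN string into a square -> piece map."""
    board: dict[str, str] = {}
    for rank_index, row in enumerate(fen.split()[0].split("/")):
        rank = 8 - rank_index  # FEN lists rank 8 first
        file_index = 0
        for ch in row:
            if ch.isdigit():
                file_index += int(ch)  # a digit is a run of empty squares
            else:
                board[f"{FILES[file_index]}{rank}"] = ch
                file_index += 1
    return board


def board_mismatches(truth: dict[str, str], echo: dict[str, str]) -> list[str]:
    """List every square where the model's echoed board disagrees with the truth."""
    problems = []
    for sq in sorted(set(truth) | set(echo)):
        want, got = truth.get(sq), echo.get(sq)
        if want != got:
            problems.append(f"{sq}: expected {want or 'empty'}, you have {got or 'empty'}")
    return problems


truth = fen_to_board("4k3/8/8/8/8/8/7P/7R w - - 0 1")
echo = {"e8": "k", "h1": "R"}  # the model forgot the pawn on h2
print(board_mismatches(truth, echo))  # ['h2: expected P, you have empty']
```

An empty list means the echo matches and planning can start; anything else goes back to the model before it is allowed to choose a move.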

Legality guard (non-negotiable).

Run the chosen move through a rules engine (as Kaggle did), and also return a concrete reason (“Path from e3 to a3 is blocked by your Kd3.”). Kaggle’s flow typically said only “illegal, try again,” which likely explains the repeat-illegal loops; adding reasons should break that loop.
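The difference between the two kinds of feedback fits in a few lines. This is a sketch, not the Kaggle harness: `referee`, the toy model and the toy validator are hypothetical, and “up to 3 retries” is read here as three extra attempts after the first. The toy model is deliberately rigged to change its mind only when told why its move failed, so it illustrates the control flow rather than proving anything about real models.

```python
from __future__ import annotations

from typing import Callable, Optional

# Returns None for a legal move, or a human-readable reason it is illegal.
Validator = Callable[[str], Optional[str]]
# Receives the latest feedback ("" on the first try) and returns a SAN move.
Model = Callable[[str], str]


def referee(model: Model, validate: Validator, max_retries: int = 3,
            give_reasons: bool = True) -> tuple[str | None, list[str]]:
    """Collect a move, allowing `max_retries` retries after the first attempt.

    Returns (accepted move, or None on forfeit; every move attempted).
    """
    feedback = ""
    attempts: list[str] = []
    for _ in range(1 + max_retries):
        move = model(feedback)
        attempts.append(move)
        reason = validate(move)
        if reason is None:
            return move, attempts
        feedback = f"Illegal: {reason} Try again." if give_reasons else "Illegal, try again."
    return None, attempts  # retries exhausted: forfeit


def toy_model(feedback: str) -> str:
    # Rigged stand-in for an LLM: it drops its idea only when told *why* it failed.
    return "Re1" if "blocked" in feedback else "Rxa3"


def toy_validate(move: str) -> str | None:
    return "Path from e3 to a3 is blocked by your Kd3." if move == "Rxa3" else None


print(referee(toy_model, toy_validate, give_reasons=False))  # (None, ['Rxa3', 'Rxa3', 'Rxa3', 'Rxa3'])
print(referee(toy_model, toy_validate, give_reasons=True))   # ('Re1', ['Rxa3', 'Re1'])
```

In a real setup the validator would be your rules engine plus a reason generator (for example, a path-blocker check on the canonical board); the loop itself stays the same.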

Optional visual cross-check.

(The tournament was text-only; no images were sent to models. This is a proposed improvement.)

Also pass a rendered board image and ask: “Call out any mismatches between the image and the JSON board.” Vision grounds text; the JSON remains the truth source.

Nice-to-haves that help a lot:

- Pinned rule card that never scrolls out: “Verify checks, pins, path blockers, side to move; output SAN + one-line rationale.”
- Micro-search for sanity (very shallow engine probe) used only for legality and one-move tactics, not to play the whole game.
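One way to keep the rule card pinned, sketched under the assumption that the prompt is assembled from plain strings each turn (`build_context` and `max_history` are hypothetical): trim only the oldest history, never the card.

```python
from __future__ import annotations

RULE_CARD = ("Verify checks, pins, path blockers, side to move; "
             "output SAN + one-line rationale.")


def build_context(history: list[str], fen: str, max_history: int = 6) -> list[str]:
    """Assemble prompt parts so the rule card can never be trimmed away.

    Only the oldest history entries are dropped; the rule card always comes
    first and the current FEN always comes last.
    """
    # Guard against history[-0:], which would return the whole list.
    recent = history[-max_history:] if max_history > 0 else []
    return [RULE_CARD, *recent, f"Current position (FEN): {fen}"]


parts = build_context([f"ply {i}" for i in range(1, 41)],
                      "4k3/8/8/8/8/8/7P/7R w - - 0 1", max_history=3)
print(parts[0] == RULE_CARD, parts[1:4])  # True ['ply 38', 'ply 39', 'ply 40']
```

Putting the FEN last keeps the current position closest to the answer, which should work with recency bias rather than against it; some setups also repeat the card just before the question.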

What you should see when you add guardrails

- Illegal-move rate plummets; with reasons returned on illegals, repeat-illegal attempts go to ~0.
- A simple state-consistency error (model’s echoed board vs truth) becomes a leading indicator of blunders 1–2 plies later.
- Dual-input (JSON + image) catches rare desyncs that text alone misses.
- Midgame accuracy stabilizes even with verbose reasoning.
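To check the leading-indicator claim on your own logs, compare how often a blunder follows within two plies of a bad echo versus a clean one. A minimal sketch, assuming you already have a per-ply mismatch count and a per-ply blunder flag (how you label blunders, e.g. by engine eval drop, is up to you); `blunder_rate_after` is a hypothetical helper, and the toy lists are made up purely to show the arithmetic.

```python
from __future__ import annotations

import math


def blunder_rate_after(mismatches: list[int], blunders: list[bool],
                       window: int = 2) -> tuple[float, float]:
    """Blunder rate within `window` plies after a mismatched echo vs. after a clean one.

    mismatches[i]: squares the model's echoed board got wrong at ply i.
    blunders[i]:   True if the move at ply i was a blunder.
    Returns (rate after a mismatch, rate after a clean echo); NaN if a group is empty.
    """
    hits = {True: 0, False: 0}
    totals = {True: 0, False: 0}
    for i, count in enumerate(mismatches):
        following = blunders[i + 1 : i + 1 + window]
        if not following:
            continue  # last ply: nothing left to observe
        bad_echo = count > 0
        totals[bad_echo] += 1
        hits[bad_echo] += int(any(following))

    def rate(group: bool) -> float:
        return hits[group] / totals[group] if totals[group] else math.nan

    return rate(True), rate(False)


# Toy data, not results: one bad echo at index 1, a blunder at index 2.
print(blunder_rate_after([0, 1, 0, 0, 0], [False, False, True, False, False]))
# (1.0, 0.3333333333333333)
```

A consistent gap between the two rates across many games would support the claim; a handful of games won’t.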

Beyond chess

This isn’t just a chess story. Any symbolic, stateful task (workflow automation, multi-step tool use, code refactors) will show the same pathology: state drift + instruction decay + shallow priors → confident nonsense. The cure is the same: pin the state, validate actions, ground with sensors.

If you want to reproduce (or publish)

- Log (a) the canonical state you fed, (b) the model’s echoed state, (c) the attempted move, and (d) the legality verdict.
- Start with three ablations over ~20–50 games each:
  - Text-only vs. canonical state vs. canonical state + legality guard (illegal-move rate & time-to-first-collapse).
  - Pinned rules on/off (repeat-illegal count).
  - Dual-input on/off (state-consistency errors caught).

You don’t need a super-engine to see the effect. The guardrails are the win.
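A minimal sketch of the log record and two of the per-arm metrics, assuming one record per attempt (so retries at the same ply appear as separate rows). The field names are hypothetical, “repeat-illegal” is counted here as the same illegal SAN re-submitted at the same ply of the same game, and time-to-first-collapse is left out because its definition depends on your collapse criterion.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class AttemptLog:
    game_id: str
    ply: int
    canonical_fen: str    # (a) the state you fed
    attempted_move: str   # (c) the SAN the model proposed
    legal: bool           # (d) the referee's verdict
    echoed_board: dict[str, str] = field(default_factory=dict)  # (b) the model's echo
    reason: str = ""      # why it was illegal, if it was


def to_jsonl(records: list[AttemptLog]) -> str:
    """One JSON object per line, easy to append to and to diff between runs."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)


def summarize(records: list[AttemptLog]) -> dict[str, float]:
    """Attempt count, illegal-move rate and repeat-illegal count for one ablation arm."""
    illegal = [r for r in records if not r.legal]
    seen: set[tuple[str, int, str]] = set()
    repeats = 0
    for r in illegal:
        key = (r.game_id, r.ply, r.attempted_move)
        if key in seen:
            repeats += 1  # same illegal move retried at the same ply
        seen.add(key)
    n = len(records)
    return {"attempts": n,
            "illegal_rate": len(illegal) / n if n else 0.0,
            "repeat_illegal": repeats}


# Illustrative rows only; "<fen>" stands in for the real FEN string.
blocked = "Path from e3 to a3 is blocked by your Kd3."
rows = [
    AttemptLog("g1", 31, "<fen>", "Rxa3", False, reason=blocked),
    AttemptLog("g1", 31, "<fen>", "Rxa3", False, reason=blocked),
    AttemptLog("g1", 31, "<fen>", "Re1", True),
]
print(summarize(rows))
```

Run `summarize` once per ablation arm and compare the numbers side by side; the JSONL lines keep the raw evidence for anyone who wants to recompute them.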

One-sentence takeaway

LLMs didn’t fail because “AI can’t play chess.” They failed because we asked a next-token predictor to do strict, stateful reasoning without giving it a state—and without checking its work.